2025-10-10 # LLMs Are Transpilers

It is no great secret that LLMs can interpret and write program code. Companies and universities alike are seeking to adopt LLMs (aka "AI"), perhaps with the vision that they will revolutionize the way we design and write our program code. To my great disgust, certain computer-science courses at my college require me to use an LLM to "upgrade" or write "superior" code for certain class assignments. Because of this, I aim to produce yet another argument on the topic of AI.

The Dilemma

As far as I can see, there exist two main beliefs about using LLMs in programming:

- "AI helps me write code more quickly, so I can get my projects done faster."

- "The code which AI produces is buggy, hard to maintain, and often pedantic."

Which is the right train of thought? Arguments for both can involve real-world examples of code, project timelines, defamation of the other opinion... Anything goes. In reality, I've found it most helpful to think about LLMs as a form of compiler.

Compilers?

Yes, you read that right. Large Language Models act as a form of compiler; more specifically, a transpiler. Both LLMs and source-code compilers share the following characteristics:

- They transform an "input language" into an "output language".

- They often produce more optimal output when given more information.

- They rely on well-formed input to produce well-formed output.

The main difference between the two, however, is that an LLM can receive an extremely flexible range of input, at the cost of producing non-conforming output. You can ask AI to make you a SaaS in Python - and yes, it will do its best to accomplish that - yet the output will contain many decisions that you have not made. These decisions could be as simple as "I'll use this font instead of that font," or as complex as "I'll make it an Email SaaS, instead of a SaaS for file hosting."
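To make the "decisions you have not made" point concrete, here is a minimal sketch. The decision names and option lists are my own illustrative assumptions, not taken from any real tool. It counts how many complete designs remain consistent with a prompt that pins down only some of the decisions:

```python
from itertools import product

# Hypothetical design decisions behind a "SaaS in Python" request.
# These names and options are illustrative assumptions only.
DECISIONS = {
    "product": ["email", "file hosting"],
    "font": ["serif", "sans-serif"],
    "database": ["sqlite", "postgres"],
}

def conforming_outputs(spec):
    """Return every complete design that is consistent with a partial spec."""
    keys = list(DECISIONS)
    # A decision the spec pins down has one option; the rest stay open.
    options = [[spec[k]] if k in spec else DECISIONS[k] for k in keys]
    return [dict(zip(keys, combo)) for combo in product(*options)]

vague = conforming_outputs({})  # "make me a SaaS"
partial = conforming_outputs({"product": "file hosting"})
full = conforming_outputs(
    {"product": "file hosting", "font": "serif", "database": "sqlite"}
)

print(len(vague), len(partial), len(full))  # 8 4 1
```

Only the fully specified request leaves a single output. Anything less leaves the remaining choices to whoever, or whatever, does the translating.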

Entropy

Entropy is loosely defined as the amount of "information" that something has. When you write the majority of an app in x86 assembly language (as was done for RollerCoaster Tycoon), you are providing detailed and intimate information about how the target machine should copy information around, modify and make decisions on this information, and produce some output or effect based on this information. With a high-level language such as JavaScript, by contrast, you trust the compiler to make many of these simpler decisions for you, and you trust that the program semantics are preserved across translation.

LLMs can accept input "source code" at any level of abstraction - from decompiling machine code to generating SQL code. This is a very powerful, yet practically broken ability. The reason we can trust high-level language compilers is that we know they will preserve program semantics, yet we can never guarantee that with our current generation of LLMs. Already we are seeing many different bugs and, in some cases, even purposely offensive code generated by LLMs. We cannot trust LLMs with our high-level code.
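As a rough illustration of what "preserving program semantics" means, here is a toy sketch (my own construction, not any real compiler). It translates small arithmetic expressions into stack-machine instructions and then checks, on random inputs, that the translated program computes the same value as the source expression:

```python
import random

# Source language: an int literal, or a tuple ("add" | "mul", left, right).
def evaluate(expr):
    if isinstance(expr, int):
        return expr
    op, left, right = expr
    a, b = evaluate(left), evaluate(right)
    return a + b if op == "add" else a * b

# Target language: a flat list of stack-machine instructions.
def compile_expr(expr):
    if isinstance(expr, int):
        return [("push", expr)]
    op, left, right = expr
    return compile_expr(left) + compile_expr(right) + [(op,)]

def run(program):
    stack = []
    for instr in program:
        if instr[0] == "push":
            stack.append(instr[1])
        else:
            b, a = stack.pop(), stack.pop()
            stack.append(a + b if instr[0] == "add" else a * b)
    return stack.pop()

def random_expr(rng, depth=3):
    if depth == 0 or rng.random() < 0.3:
        return rng.randint(-9, 9)
    op = rng.choice(["add", "mul"])
    return (op, random_expr(rng, depth - 1), random_expr(rng, depth - 1))

rng = random.Random(0)
samples = [random_expr(rng) for _ in range(200)]
# Semantics are preserved if source and target agree on every sample.
preserved = all(evaluate(e) == run(compile_expr(e)) for e in samples)
print(preserved)
```

Because the translator here is a fixed, deterministic function, passing this check tells you something about every future run. The same check against an LLM's output only tells you about the one output you happened to test.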

Overhead

LLMs have the potential to work with both ultra-high-level and ultra-low-level input. They can translate English - and lower-level code - and, for the most part, produce output which appears correct. In most cases, this output even compiles on the first try! However... to use an LLM, you still need to tell it what you want. If you are specific, you need to describe your requirements in some lower-level input language. If you don't care about specifics, you can use higher-level English.

The issue is that real-world projects require specifics that can't be explained in one or two sentences. When we ask an LLM to produce a SaaS for us, we have strict requirements that are better expressed in a design document or real code. To get the same quality of code out of an LLM as a human would produce, we need to put the same effort into the prompt as we would into the code itself. In other words, the entropy is the same regardless of whether we are using C or English. At that point, why have the middleman? Using AI will slow you down.
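A back-of-the-envelope sketch of that claim follows. It assumes, purely for illustration, that a project boils down to independent decisions with equally likely options; the numbers are made up. The information needed to pin down every decision is the same number of bits whether you spend them in C or in English:

```python
import math

def bits_required(option_counts):
    """Bits needed to pin down one choice per decision, assuming the
    decisions are independent and every option is equally likely."""
    return sum(math.log2(n) for n in option_counts)

# Hypothetical project: 10 decisions with 4 options each.
project = [4] * 10
total_bits = bits_required(project)

# A short prompt that settles only the first 2 decisions.
prompt_bits = bits_required(project[:2])

# Whatever the prompt leaves out is decided by the LLM, not by you.
left_to_llm = total_bits - prompt_bits

print(total_bits, prompt_bits, left_to_llm)  # 20.0 4.0 16.0
```

The only way to bring the leftover figure to zero is to supply all of the bits yourself, at which point the prompt carries as much information as the code would have.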

Familiarity

I often find myself wondering why so many people claim success from using AI. Reports leave little doubt that it cripples 95% of the companies that adopt it. Well, there is a catch. If you don't know how to work with C, Haskell, or Lean 4 - but you do know some other language (even a spoken language, like English) - then AI can indeed improve your workflow. Otherwise, it would likely hurt your workflow:

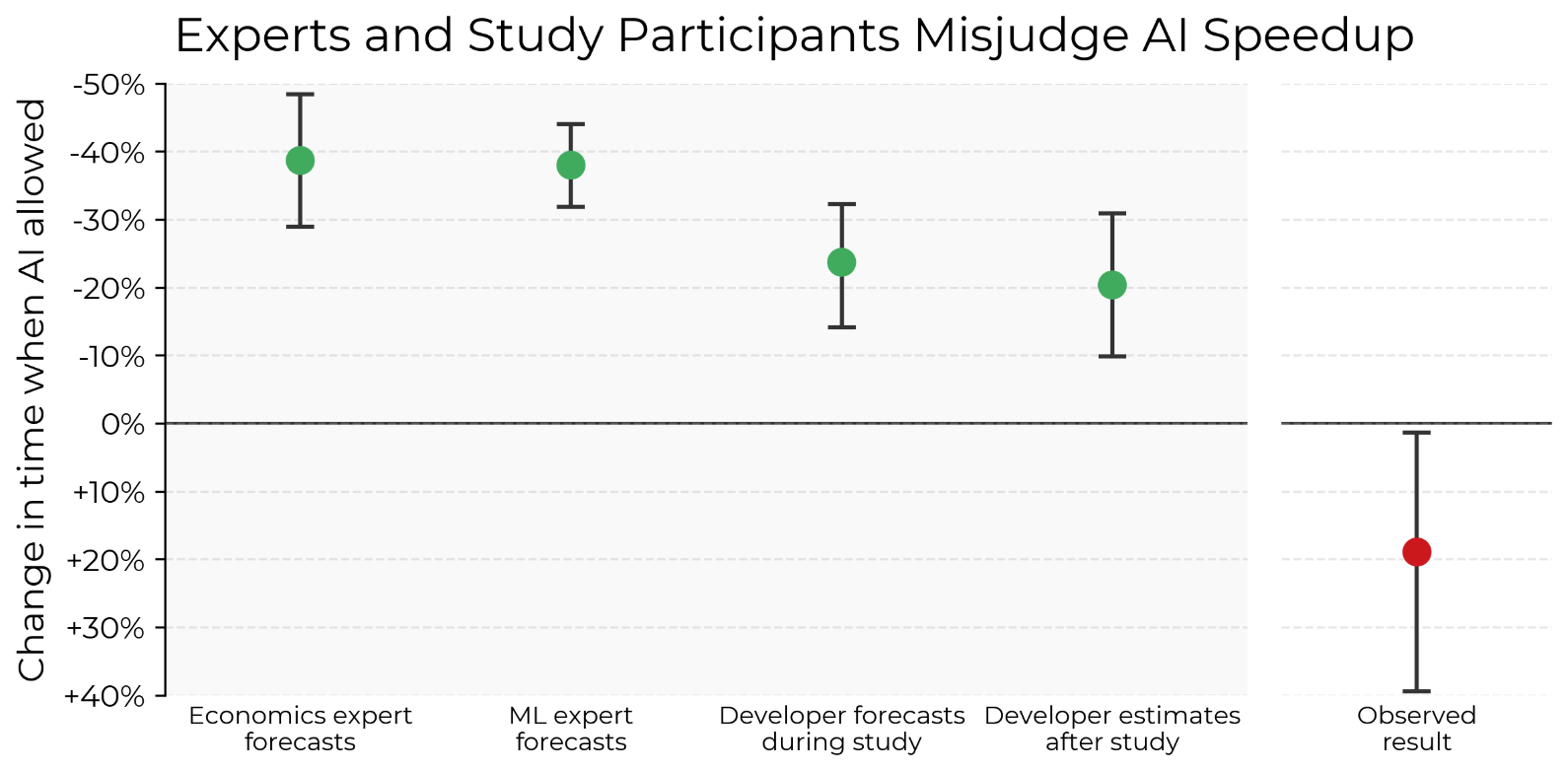

Figure 1: Experts and study participants (experienced open-source contributors) substantially over-estimate how much AI assistance will speed up developers—tasks take 19% more time when study participants can use AI tools like Cursor Pro. See Appendix D for detail on speedup percentage and confidence interval methodology.

The code is what ultimately matters. If an LLM can translate your English into better code than you would write directly, then you should consider using it. Likewise, if you are most at home in the target language itself, and the English input would be overly simple or hard to follow, then you should consider not using an LLM.

Conclusion

LLMs ingest diverse and unstructured input, at the cost of producing potentially incorrect output. If your requirements match the target abstraction level, the same amount of effort is required whether you are using a traditional programming language or an LLM. If you are experienced in the target language, you gain little - and lose much - from using an LLM to write your code.

When a college course requires me to use an LLM to "upgrade" or write "superior" code for certain class assignments, I protest - and rightly so. I aim to understand my code, and grow because of it. You can choose to use an LLM to write your code; I don't care. Just don't force me to do the same.